Tianyi Alex Qiu

Hi! I am Tianyi :)

[My Priorities] I study uplift of human truth-seeking and moral progress in human-computer interaction - what I believe to be among the most important problems of our time.

[My Toolbox] By training, I am a computer scientist and statistician. By disposition, I am an avid experimentalist, but I love to dig deep into my findings in search of a generalizable & falsifiable theory. I also learn from economists (i) how to do reliable causal inference and (ii) how to think about multi-agent interactions. After all, both fields study complex systems (humans, computers) embedded in larger complex systems (societies).

[My Whereabouts] I am a research fellow with the Oxford Faculty of Philosophy (HAI Lab) and incoming PhD student at Stanford CS. I previously did research at UC Berkeley, have been a member of the PKU Alignment Team, and was, until recently, an Anthropic Fellow working with the Societal Impact and Alignment Science teams. I mentor part-time for the Supervised Program for Alignment Research - please also feel free to cold-email me if you'd like informal collaboration!

Selected Works

Project: Facilitate progress

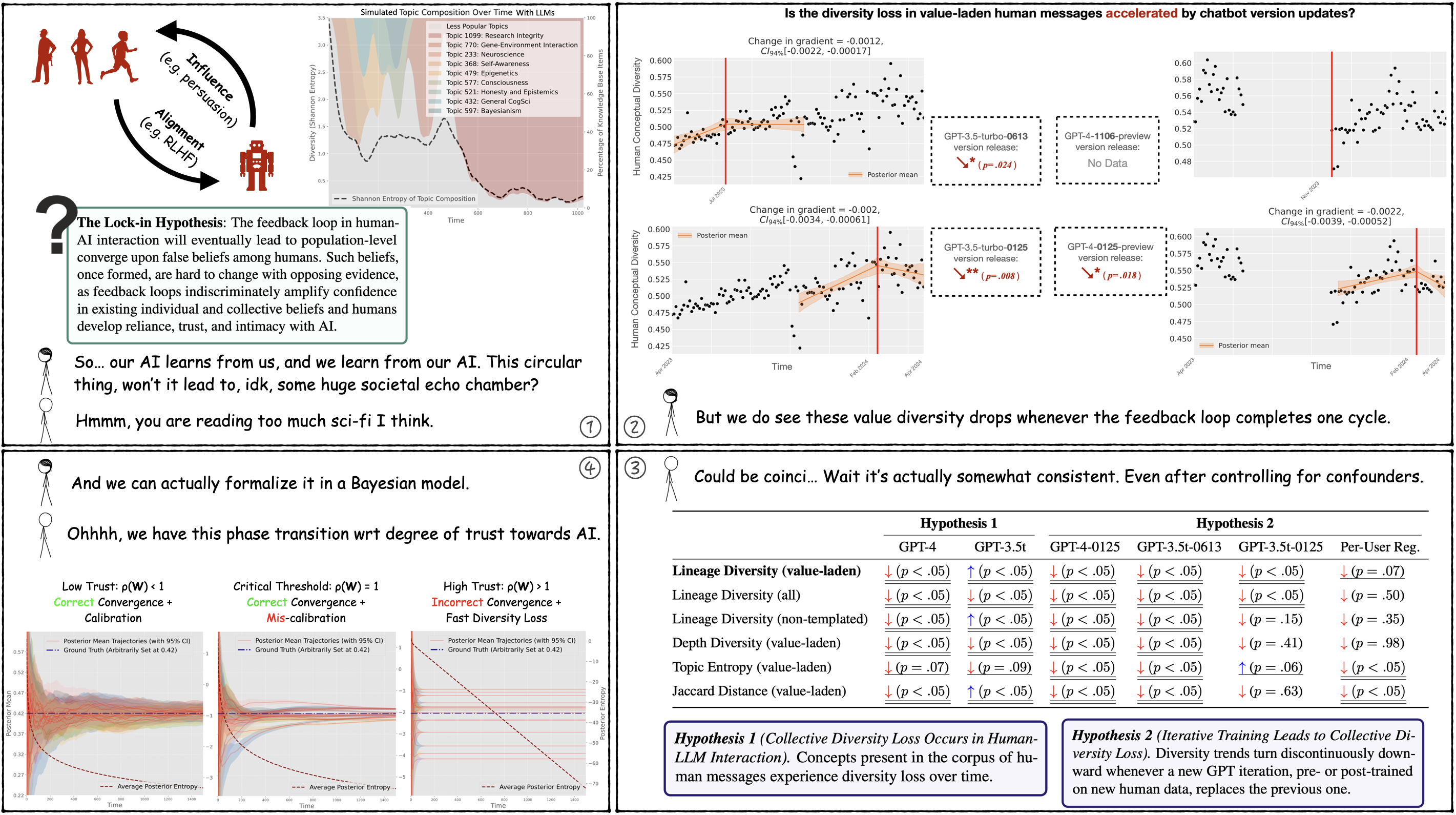

Here are some silly xkcd-style comics on what we call the lock-in hypothesis.

The Lock-in Hypothesis: Stagnation by Algorithm (ICML'25) Tianyi Qiu*, Zhonghao He*, Tejasveer Chugh, Max Kleiman-Weiner (2025) (*Equal contribution)

Danger (premature value lock-in): Frontier AI systems hold increasing influence over the epistemology of human users. Such influence can reinforce prevailing societal values, potentially contributing to the lock-in of misguided moral beliefs and, consequently, the perpetuation of problematic moral practices on a broad scale.

Solution (progress alignment): We introduce progress alignment as a technical solution to mitigate the danger. Progress alignment algorithms learn to emulate the mechanics of human moral progress, thereby addressing the susceptibility of existing alignment methods to contemporary moral blindspots.

Infrastructure (ProgressGym): To empower research in progress alignment, we introduce ProgressGym, an experimental framework allowing the learning of moral progress mechanics from history, in order to facilitate future progress in real-world moral decisions. Leveraging 9 centuries of historical text and 18 historical LLMs that we trained, ProgressGym enables codification of real-world progress alignment challenges into concrete benchmarks. (Hugging Face, GitHub, Leaderboard)

ProgressGym: Alignment with a Millennium of Moral Progress (NeurIPS'24 Spotlight, Dataset & Benchmark Track)

Tianyi Qiu†*, Yang Zhang*, Xuchuan Huang, Jasmine Xinze Li, Jiaming Ji, Yaodong Yang (2024) (†Project lead, *Equal technical contribution)

We introduce the Martingale Score, an unsupervised metric based on Bayesian statistics, to show that reasoning in LLMs often leads to belief entrenchment rather than truth-seeking, and we show that this score predicts ground-truth accuracy.

The key property we rely on: under rational belief updating, the expected value of future beliefs should remain equal to the current belief, i.e., belief updates are unpredictable from the current belief.
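To make the property concrete: writing b_t for the current belief, the martingale condition says E[b_{t+1} | b_t] = b_t, so regressing the update b_{t+1} - b_t on b_t should give a slope near zero. Below is a minimal sketch of that check on synthetic data. It is an illustration only, not necessarily the exact Martingale Score defined in the paper; the function name `update_slope` and both simulated reasoners are assumptions made up for this example.

```python
import numpy as np

def update_slope(prior, posterior):
    """OLS slope of the belief update (posterior - prior) regressed on the prior.

    Hypothetical helper for illustration: a slope near 0 is consistent with
    the martingale property, while a positive slope means updates are
    predictable from the prior in the direction of entrenchment.
    """
    prior = np.asarray(prior, dtype=float)
    update = np.asarray(posterior, dtype=float) - prior
    centered = prior - prior.mean()
    return float(centered @ (update - update.mean()) / (centered @ centered))

rng = np.random.default_rng(0)
n = 20_000
step = 0.3  # how far each belief moves in one round of "reasoning" (assumed)

# Synthetic prior beliefs (probabilities assigned to some proposition).
prior = rng.uniform(0.0, 1.0, size=n)

# Martingale reasoner: moves toward an outcome drawn with probability equal
# to the prior, so the expected posterior equals the prior.
outcome = (rng.random(n) < prior).astype(float)
fair_posterior = prior + step * (outcome - prior)

# Entrenched reasoner: always moves toward whichever side it already leans,
# regardless of evidence.
leaning = (prior > 0.5).astype(float)
entrenched_posterior = prior + step * (leaning - prior)

fair_slope = update_slope(prior, fair_posterior)
entrenched_slope = update_slope(prior, entrenched_posterior)
print(f"martingale reasoner slope: {fair_slope:+.3f}")
print(f"entrenched reasoner slope: {entrenched_slope:+.3f}")
```

The check needs no ground-truth labels, only pairs of beliefs before and after reasoning, which is what makes a metric of this kind unsupervised.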

We think belief entrenchment is the major reason why LLMs are causing delusions and even psychosis in users today, and, if the root cause remains unaddressed, it may cause more serious epistemic harm at an even larger scale. Building on this work, we aim to extend the same metric to human-AI interaction, and to use it in training as a remedy for belief entrenchment.

Stay True to the Evidence: Measuring Belief Entrenchment in Reasoning LLMs via the Martingale Property (NeurIPS'25)

Zhonghao He*, Tianyi Qiu*, Hirokazu Shirado, Maarten Sap (2025) (*Equal contribution)

Project: Theoretical deconfusion

Alignment training is easily undone with finetuning. Why so? This work proves, theoretically and experimentally, that further finetuning degrades alignment performance far faster than it degrades pretraining performance, due to the much smaller amount of alignment training data. We operate under a data compression model of multi-stage training.

Language Models Resist Alignment: Evidence From Data Compression (Best Paper Award, ACL'25 Main)

Jiaming Ji*, Kaile Wang*, Tianyi Qiu*, Boyuan Chen*, Jiayi Zhou*, Changye Li, Hantao Lou, Juntao Dai, Yunhuai Liu, Yaodong Yang (2024) (*Equal contribution)

Classical social choice theory assumes complete information about all preferences of all stakeholders. This assumption does not hold for AI alignment, nor for legislation, indirect elections, etc. Dropping it, this work designs the representative social choice formalism, which models social choice decisions based on a mere finite sample of preferences. Its analytical tractability is established with statistical learning theory, and Arrow-like impossibility theorems are proved alongside.

Representative Social Choice: From Learning Theory to AI Alignment (Best Paper Award, NeurIPS'24 Pluralistic Alignment Workshop; in press at the Journal of Artificial Intelligence Research)

Tianyi Qiu (2024)

It is well known that classical generalization analysis doesn't work on deep neural nets without prohibitively strong assumptions. This work tries to develop an alternative: an empirically grounded model of reward generalization in RLHF that can derive formal generalization bounds while taking into account fine-grained information topologies.

Reward Generalization in RLHF: A Topological Perspective (ACL'25 Findings)

Tianyi Qiu†*, Fanzhi Zeng*, Jiaming Ji*, Dong Yan*, Kaile Wang, Jiayi Zhou, Han Yang, Juntao Dai, Xuehai Pan, Yaodong Yang (2025) (†Project lead, *Equal technical contribution)

Talks & Reports

[Talk] The Lock-in Hypothesis and Truth-Seeking (Jul 2025)

[Talk] Belief Entrenchment and How to Remove it (Jun 2025)

[Talk] The Lock-in Hypothesis: Stagnation by Algorithm (May 2025)

[Talk] Representative Social Choice (Dec 2024)

[Talk] Value Alignment: History, Frontiers, and Open Problems (Jun 2024)

[Talk] ProgressGym: Alignment with a Millennium of Moral Progress (Jun 2024)

Get in touch!

Please feel free to reach out! If you are on the fence about getting in touch, consider yourself encouraged to do so :)

I can be reached at the email address qiutianyi.qty@gmail.com, or on Twitter via the handle @Tianyi_Alex_Qiu.